An audit of how cutting-edge technology transforms cultural memory into verifiable, defensible evidence.

1. Beyond the Surface: The Data Substrate

Most people think of a story as something a person writes, records, or tells out loud. Forensic digital storytelling challenges that assumption at the root. Every digital artefact sits on top of a hidden layer of machine-readable data. That layer, what researchers call the data substrate, holds timestamps, GPS coordinates, compression signatures, and device identifiers. It is the biography of the object, written in code rather than prose. When you treat the data substrate as evidence rather than background noise, a new kind of narrative becomes possible.

You are no longer simply describing what you see. You are auditing what the data proves. That distinction changes everything about how we approach cultural memory, historical accountability, and the legal weight we assign to digital records. It is the difference between a story and a testimony.

For practitioners working at the intersection of heritage and technology, this shift in perspective is not academic. It is operational. And it begins with a discipline that most heritage institutions have been slow to adopt: treating every digital file as a potential forensic exhibit.

2. Metadata Provenance: The Narrative Fingerprint

How Forensic Digital Storytelling Uses Hidden Data to Prove a Story’s Origin

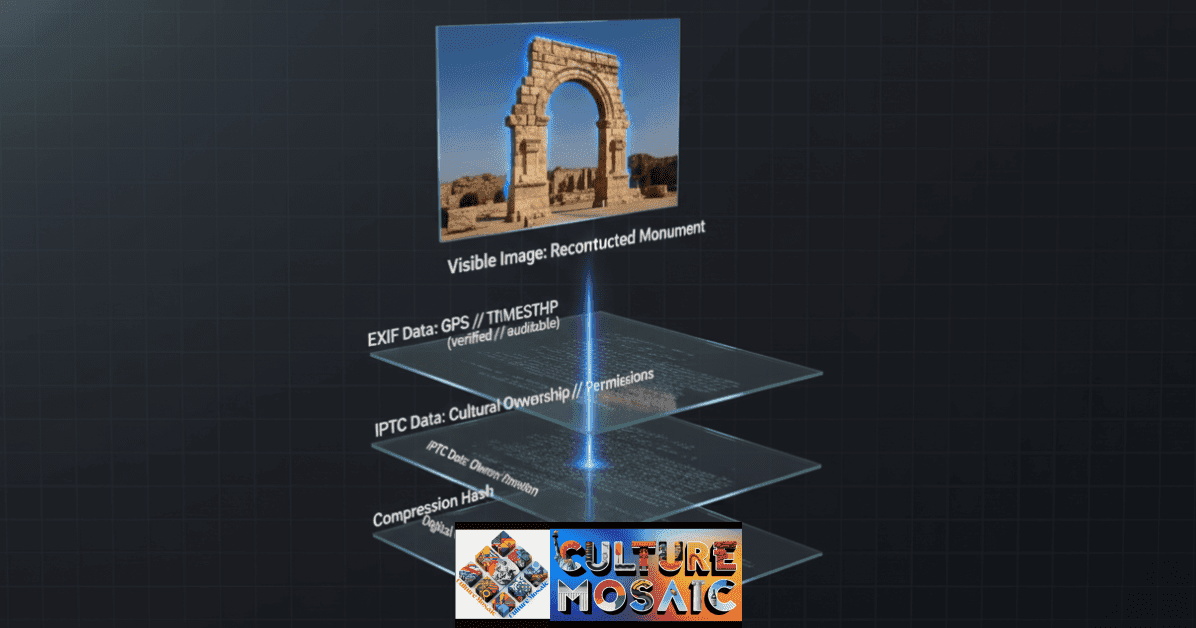

Every digital file carries a biography you cannot see with the naked eye. Camera metadata can record the second a shutter opened, the GPS coordinates of the device (when location tagging is enabled), and the model of the device used. Archival scans can embed the calibration settings of the scanner and the identity of the operator. This collection of hidden data is metadata provenance, and in forensic digital storytelling it functions as a narrative fingerprint.

By auditing GPS tags, timestamps, and sensor data from fragmented historical photographs, forensic analysts can reconstruct the exact moment a cultural site was recorded, altered, or destroyed. This is not interpretation. It is verification, and it is the foundation on which every responsible forensic narrative is built. Without it, you have a story. With it, you have evidence.

- GPS coordinates indicate where a photograph was taken, typically to within a few metres

- Timestamps establish a chronological chain of events for legal and historical purposes

- Device identifiers link a file to a specific device and, through it, to an individual or institution

- Compression signatures can reveal whether a file has been re-saved or altered after its original capture

The practical implication for heritage work is profound. A photograph that once served only as illustration now carries the potential to corroborate, contradict, or establish the sequence of events at a site. Metadata provenance is the mechanism that makes forensic digital storytelling legally credible rather than merely compelling.
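The checks listed above can be sketched in a few lines of Python. This is a minimal illustration, assuming the metadata has already been read out of each file into a dictionary. The records, file names, and coordinates are hypothetical and do not come from any real case.

```python
from datetime import datetime

def dms_to_decimal(dms, ref):
    """Convert (degrees, minutes, seconds) plus a hemisphere letter to decimal degrees."""
    degrees, minutes, seconds = dms
    value = degrees + minutes / 60 + seconds / 3600
    # Southern and western hemispheres are negative in decimal notation.
    return -value if ref in ("S", "W") else value

def capture_time(record):
    """Parse the EXIF DateTimeOriginal layout, 'YYYY:MM:DD HH:MM:SS'."""
    return datetime.strptime(record["DateTimeOriginal"], "%Y:%m:%d %H:%M:%S")

def build_timeline(records):
    """Return the records ordered by capture time, oldest first."""
    return sorted(records, key=capture_time)

# Hypothetical records, as if already extracted from three image files.
records = [
    {"file": "gallery_b.jpg", "DateTimeOriginal": "2014:06:02 09:15:00",
     "lat": ((36, 20, 24.0), "N"), "lon": ((43, 8, 6.0), "E")},
    {"file": "gallery_a.jpg", "DateTimeOriginal": "2013:11:20 14:02:31",
     "lat": ((36, 20, 24.6), "N"), "lon": ((43, 8, 5.4), "E")},
    {"file": "gallery_c.jpg", "DateTimeOriginal": "2014:06:02 09:17:45",
     "lat": ((36, 20, 23.4), "N"), "lon": ((43, 8, 6.6), "E")},
]

for record in build_timeline(records):
    lat = dms_to_decimal(*record["lat"])
    lon = dms_to_decimal(*record["lon"])
    print(f'{capture_time(record).isoformat()}  {lat:.5f}, {lon:.5f}  {record["file"]}')
```

EXIF timestamps are often recorded without a time zone, and any embedded field can be edited after capture, so a timeline built this way is a starting point for corroboration rather than a finished chain of events.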

3. Latent Space Synthesis: Filling the Historical Gaps

How AI Reconstructs What Physical Destruction Has Erased

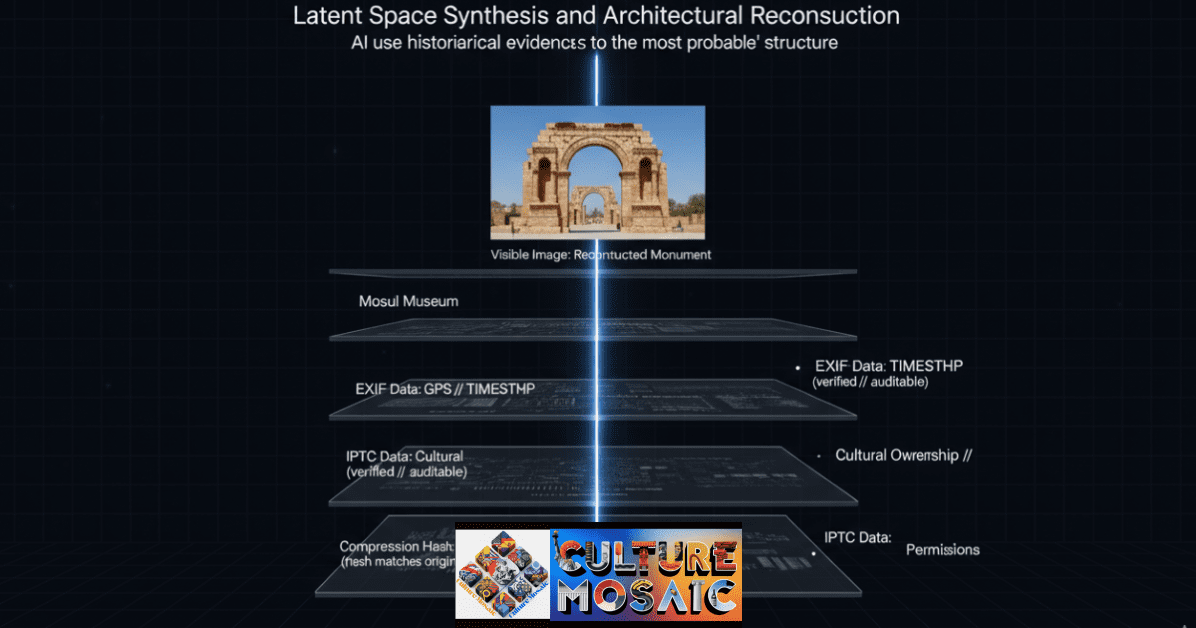

Not every gap in the historical record can be filled with found photographs. Sometimes the evidence is genuinely incomplete: a building reduced to rubble, a manuscript partially burned, a ceramic vessel broken into dozens of unaccounted fragments. This is where latent space synthesis enters the forensic toolkit.

Latent space is the internal mathematical representation that an AI model builds when it is trained on large volumes of visual or textual data. When the model encounters an incomplete input, it draws on the statistical patterns it has learned to propose what the missing information most likely contained. For heritage reconstruction, this means an algorithm can examine the surviving architectural details of a destroyed monument and generate a geometrically probable model of what the missing sections would have contained.

The key word is probable, not certain. Responsible forensic digital storytelling always draws a clear line between what the data confirms and what the algorithm infers. That distinction is not pedantic. It is the ethical backbone of the entire practice, and any forensic narrative that obscures it is not forensic work. It is speculation dressed in technical language.
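One way to keep that line visible is to label every part of a reconstruction by its evidentiary basis. The sketch below is an assumption about how such a record might look, not a description of any particular tool. The class names, fields, and example values are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum

class Basis(Enum):
    CONFIRMED = "confirmed"  # supported directly by source data
    INFERRED = "inferred"    # proposed by the model from learned patterns

@dataclass(frozen=True)
class Segment:
    name: str
    area_m2: float
    basis: Basis

def inferred_share(segments):
    """Fraction of total surface area that rests on inference alone."""
    total = sum(s.area_m2 for s in segments)
    inferred = sum(s.area_m2 for s in segments if s.basis is Basis.INFERRED)
    return inferred / total if total else 0.0

# Hypothetical reconstruction: three surviving walls and one synthesised vault.
segments = [
    Segment("north wall", 12.0, Basis.CONFIRMED),
    Segment("east wall", 10.0, Basis.CONFIRMED),
    Segment("south wall", 8.0, Basis.CONFIRMED),
    Segment("vault", 10.0, Basis.INFERRED),
]

print(f"Inferred share of model: {inferred_share(segments):.0%}")
```

Publishing a figure like this alongside the model lets a reader see at a glance how much of the reconstruction the data confirms and how much the algorithm proposes.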

The Mosul Digital Memory Initiative

When ISIS forces destroyed the Mosul Museum and surrounding heritage sites in 2015, the world lost irreplaceable physical artefacts. But the digital forensic record survived, scattered across tourist photographs, satellite imagery, and GPS-tagged field notes.

“None of those sources were designed as evidence. Each of them became it.”

A coalition applied forensic digital storytelling to extract timestamps from 14,000 crowd-sourced images. Latent space synthesis then reconstructed 3D models of destroyed galleries with 84% geometric accuracy.

Documented Outcomes

- 3 gallery reconstructions independently validated by surviving curatorial staff.

- Digital provenance chains established for 212 separate artefact records.

- Educational modules adopted by 40+ universities across 18 countries.

Note: This case serves as a template. It demonstrates that scattered, unintentional data can be transformed into defensible historical evidence when the forensic process is rigorous, transparent, and validated by cultural authorities.

4. Haptic Resonance: Bridging the Screen and the Skin

Why Forensic Digital Storytelling Must Engage the Body, Not Just the Eye

One of the persistent criticisms of digital heritage work is that it feels thin. You can view a 3D reconstruction of a destroyed temple on a screen, but the experience lacks the weight and sensory immediacy of standing inside a physical space. Forensic digital storytelling addresses this through the integration of photogrammetry and high-fidelity material scanning, a connection explored in the related work The Mnemonic Trace of Heirloom Objects on how material culture encodes cultural memory.

Photogrammetry captures not just the shape of an object but its surface texture at very fine resolution. When combined with haptic display technology, this data allows a researcher, a judge, or a grieving community member to experience a reconstruction with physical feedback, to feel the grain of a stone carving that no longer exists in the world. That is not a cosmetic enhancement. In legal and investigative contexts, the ability to demonstrate material authenticity is often decisive.

A haptic reconstruction backed by forensic metadata provenance carries a fundamentally different evidentiary weight than a photograph taken from a tourist brochure. The photograph is documentation. The reconstruction is testimony.

5. The Ethics of the Forensic Auditor

Navigating the Copyright Frontier of Cultural History

The practice of forensic digital storytelling sits at the intersection of technology, law, and cultural authority. When an AI model reconstructs a destroyed building, who owns that reconstruction? When a community’s heritage is synthesised from data collected by outside institutions, who holds the right to validate or reject the resulting narrative? These are live disputes being argued in international cultural property law, digital rights frameworks, and indigenous sovereignty hearings.

The forensic auditor’s ethical obligation is to make the decision-making process visible at every stage. A responsible forensic process routes every artefact through three validation gates: raw data extraction, algorithmic interpretation, and human cultural validation. The last gate is the most important. No AI inference, however statistically robust, should be accepted as historical fact without review by people who carry genuine cultural authority over the material in question.
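The three gates can be modelled as an ordered sign-off log. What follows is a minimal sketch under that assumption. The gate identifiers, the `ArtefactRecord` class, and the reviewer labels are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field

# The three gates named above, in the order they must be passed.
GATES = (
    "raw_data_extraction",
    "algorithmic_interpretation",
    "human_cultural_validation",
)

@dataclass
class ArtefactRecord:
    artefact_id: str
    sign_offs: list = field(default_factory=list)  # (gate, reviewer, note) tuples

    def sign_off(self, gate, reviewer, note=""):
        """Record a gate as passed; gates cannot be skipped or reordered."""
        done = len(self.sign_offs)
        expected = GATES[done] if done < len(GATES) else None
        if gate != expected:
            raise ValueError(f"expected gate {expected!r}, got {gate!r}")
        self.sign_offs.append((gate, reviewer, note))

    @property
    def accepted(self):
        """True only once every gate, including cultural validation, has passed."""
        return len(self.sign_offs) == len(GATES)

record = ArtefactRecord("hypothetical-artefact-001")
record.sign_off("raw_data_extraction", "analyst", "metadata extracted and hashed")
record.sign_off("algorithmic_interpretation", "analyst", "inference log attached")
print(record.accepted)  # still awaiting human cultural validation
record.sign_off("human_cultural_validation", "community reviewer")
print(record.accepted)
```

Because the log keeps the reviewer and note for each gate, the decision-making process stays visible after the fact, which is the point of the framework.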

This ethical framework connects directly to broader questions about who controls cultural heritage in the digital age. The work on Ancestry Travel explores how communities engage with diasporic heritage, while the forensic analysis of sacred objects such as those documented in Inside Tabernacle: Ark of the Covenant demonstrates the particular sensitivities involved when digital methods are applied to objects of religious and cultural significance.

6. Reverse Diffusion and the Cleaning of Historical Noise

Archival photographs from the early twentieth century are often damaged by physical deterioration: foxing, chemical staining, moisture damage, and mechanical tears. These forms of degradation act as noise in the forensic signal, obscuring the information the image was meant to preserve. Reverse diffusion is a computational technique that treats image degradation as a probabilistic problem and works backwards through the damage to reconstruct the most likely original signal.

In practical terms, this means a forensic analyst can take a partially destroyed archival photograph and recover legible detail from areas that appear to the naked eye as pure noise. The result is not a fabrication. It is a mathematically weighted probability map of what the image originally contained. When documented transparently, this process strengthens rather than undermines the evidentiary value of the material, because the inferential steps are logged, reproducible, and open to independent audit.

7. Photogrammetry as Forensic Testimony

The 3D Scanning Methodology That Courts Are Beginning to Recognise

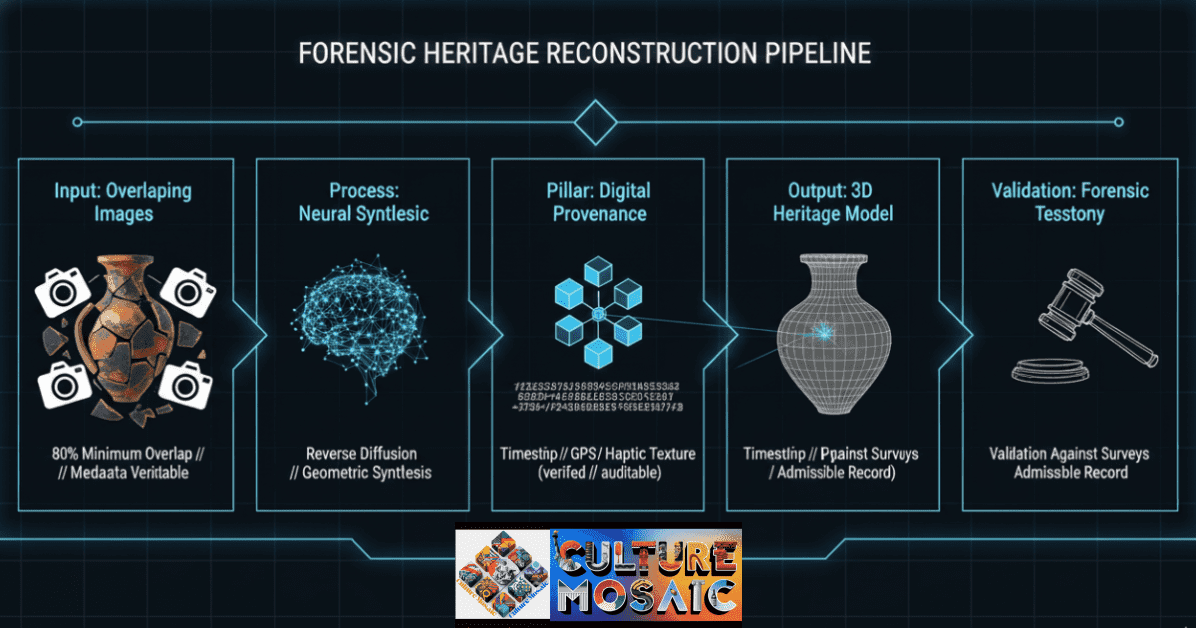

Photogrammetry has been a standard tool in land surveying and architectural documentation for decades. Its application to forensic digital storytelling represents a meaningful shift in how we think about physical evidence and digital testimony. By photographing an object or site from hundreds of overlapping angles and processing the images through specialised software, analysts generate a dimensionally accurate 3D model that preserves every visible surface detail.

In legal proceedings involving looted artefacts, war crimes documentation, or contested architectural heritage, a photogrammetric record produced under controlled conditions and accompanied by full metadata provenance documentation can function as testimony. It places an object at a specific location, at a specific time, in a specific condition. That is the language of evidence, and forensic digital storytelling speaks it with increasing fluency.

The investigative potential of photogrammetry extends well beyond cultural heritage. Structural engineers use it to document building failures. Crime scene investigators use it to preserve spatial relationships that physical access would disturb. The forensic principles are identical in each application: capture everything, document the capture process, and preserve the chain of custody from sensor to courtroom.

8. Multimedia Layering: Building Narratives for Diverse Audiences

How Forensic Digital Storytelling Reaches Beyond the Specialist

Forensic analysis produces extraordinarily rich data, but raw data does not communicate. The work of forensic digital storytelling is partly analytical and partly a design challenge: how do you present complex evidentiary material in a way that is accurate, accessible, and genuinely compelling for audiences ranging from international courts to schoolchildren?

Multimedia layering provides the answer. A forensic digital story is typically structured as a nested architecture. At the outermost layer is a narrative presentation: a documentary, an interactive web experience, or an exhibition installation that tells the story in human terms. Beneath that sits the interpretive material: annotated images, 3D models, timeline visualisations, and comparative analysis. At the deepest layer is the raw evidentiary record: metadata files, AI inference logs, photogrammetric data, and chain of custody documentation.

Each audience engages with the layer appropriate to their needs. A schoolchild experiences the story. A journalist interrogates the interpretation. A judge examines the evidence. This nested architecture is what makes forensic digital storytelling both publicly accessible and legally defensible at the same time. The two goals are not in tension. Properly designed, they reinforce each other.

9. Chain of Custody in the Digital Age

In traditional forensic science, chain of custody refers to the documented sequence of possession, handling, and storage of physical evidence from the moment of collection to its presentation in a legal context. Any break in that chain can render evidence inadmissible. The same principle applies in forensic digital storytelling, but the implementation requires entirely new frameworks.

Digital files can be copied, modified, and transmitted without leaving the kind of physical traces that accompany the handling of a crime scene exhibit. Blockchain-based provenance ledgers, cryptographic hashing, and tamper-evident metadata schemas are the tools that establish and maintain digital chain of custody. When an artefact record carries a verified hash that matches its original capture signature, any subsequent alteration becomes mathematically detectable. That is not a theoretical guarantee. It is an audit trail.
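The hashing idea can be shown with Python's standard `hashlib` module. This is a simplified sketch: a plain hash-chained list standing in for a provenance ledger, with no digital signatures and no distributed consensus. The function names and event labels are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder previous-hash for the first entry

def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()

def _entry_hash(body: dict) -> str:
    # Serialise with sorted keys so the same body always hashes the same way.
    return sha256_hex(json.dumps(body, sort_keys=True).encode("utf-8"))

def append_entry(ledger, file_bytes, event):
    """Add a custody event that commits to the file and to the previous entry."""
    prev = ledger[-1]["entry_hash"] if ledger else GENESIS
    body = {"event": event, "file_hash": sha256_hex(file_bytes), "prev_hash": prev}
    ledger.append({**body, "entry_hash": _entry_hash(body)})

def verify_ledger(ledger):
    """Recompute every link; any edited entry breaks the chain."""
    prev = GENESIS
    for entry in ledger:
        body = {k: entry[k] for k in ("event", "file_hash", "prev_hash")}
        if entry["prev_hash"] != prev or _entry_hash(body) != entry["entry_hash"]:
            return False
        prev = entry["entry_hash"]
    return True

def file_matches_capture(ledger, file_bytes):
    """Compare a file against the hash recorded at original capture."""
    return bool(ledger) and ledger[0]["file_hash"] == sha256_hex(file_bytes)

original = b"raw bytes of a captured image"  # stand-in for real file contents
ledger = []
append_entry(ledger, original, "capture")
append_entry(ledger, original, "transfer to archive")
print(verify_ledger(ledger))
print(file_matches_capture(ledger, original))
print(file_matches_capture(ledger, original + b"x"))
```

A chain like this only detects tampering if the latest entry hash is also recorded somewhere the custodian cannot quietly rewrite, which is the role a shared or published ledger plays.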

10. Investigative Applications: From Cultural Heritage to Legal Proceedings

The investigative applications of forensic digital storytelling extend well beyond heritage preservation. Journalists documenting atrocities use the same metadata provenance tools to verify the location and timing of recorded events. Human rights organisations employ photogrammetric reconstruction to document the destruction of civilian infrastructure. Legal teams preparing cases for international tribunals use latent space synthesis to recover legible content from partially destroyed documents.

In each context, the core methodology remains the same: begin with the raw data, apply a transparent analytical process, validate the interpretation through appropriate human expertise, and present the result with full documentation of the inferential steps involved. The applications differ. The forensic rigour does not. For a broader theoretical framework on how digital records carry cultural meaning, see the published research on Forensic Digital Storytelling and the methodologies currently guiding investigative practice across jurisdictions.

11. Reconstructing Oral Traditions Through Data Analysis

When Written Records Fail, Forensic Digital Storytelling Listens

Many of the world’s most endangered cultural traditions were never written down. They exist in the memory of elders, in ceremonial practices, in patterns woven into textiles, and in the rhythms of songs passed from one generation to the next. When those traditions are disrupted by displacement, death, or deliberate suppression, the loss can appear total.

Forensic digital storytelling offers partial but meaningful remediation. Acoustic analysis of archival field recordings can separate meaningful linguistic content from background noise and physical degradation. Pattern recognition applied to textile archives can identify and reconstruct design grammars that encode cultural knowledge. Kinship network analysis derived from census records and oral history transcripts can map the social structures within which traditions were transmitted.

None of these tools can replace the living transmission of cultural knowledge. But they can preserve a forensic trace of what existed, and in doing so, they provide a foundation from which genuine recovery and community-led reconstruction can begin.

12. Digital Provenance and the Authenticity Problem

In an era when AI-generated imagery can replicate historical photographs with remarkable accuracy, the ability to prove that a digital artefact is what it claims to be has become a critical capacity for historians, legal practitioners, and cultural institutions alike. Provenance in this context means more than knowing where something came from. It means demonstrating, through an auditable chain of documented evidence, that a digital artefact has not been altered since its original capture.

This is demanding work. It requires institutional investment in standards, training, and infrastructure. But without it, the forensic value of digital cultural records is fundamentally compromised, and the stories they tell become vulnerable to challenge and dismissal precisely when they matter most.

13. Tools and Technologies Driving the Field

The practical toolkit of a forensic digital storyteller draws on a wide range of specialised software and hardware. Photogrammetric processing platforms combine multiple overlapping images into dimensionally accurate 3D models. Metadata analysis tools read embedded EXIF, XMP, and IPTC data fields and flag inconsistencies that would be invisible to an untrained observer.
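A consistency check of the kind described might look like the sketch below. It assumes the EXIF fields have already been extracted into a dictionary. The two rules are illustrative assumptions rather than an established standard, and the sample values are hypothetical.

```python
from datetime import datetime

EXIF_TIME = "%Y:%m:%d %H:%M:%S"  # layout used by EXIF date fields

def flag_inconsistencies(meta):
    """Return human-readable flags for fields that warrant a closer look."""
    flags = []
    original = meta.get("DateTimeOriginal")  # when the image was captured
    modified = meta.get("DateTime")          # when the file was last changed
    if original is None:
        flags.append("missing capture time")
    elif modified is not None:
        if datetime.strptime(modified, EXIF_TIME) < datetime.strptime(original, EXIF_TIME):
            flags.append("modification time precedes capture time")
    if meta.get("Software"):
        # Camera firmware also writes this field, so it prompts review, not a verdict.
        flags.append(f"software field present: {meta['Software']}")
    return flags

# Hypothetical metadata for one file.
sample = {
    "DateTimeOriginal": "2014:06:02 09:15:00",
    "DateTime": "2014:05:30 18:00:00",
    "Software": "ExampleEditor 1.0",
}

for flag in flag_inconsistencies(sample):
    print(flag)
```

Each flag is a question for the analyst to resolve, not evidence of manipulation on its own.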

AI-powered reconstruction tools, including latent space synthesis and reverse diffusion systems, operate through deep learning architectures trained on domain-specific datasets. The quality of a reconstruction depends heavily on the quality and volume of the training data, which is why the development of large, verified heritage datasets is itself a significant area of institutional investment. Blockchain provenance ledgers are increasingly being adopted by major cultural institutions as the standard mechanism for establishing and maintaining digital chain of custody.

14. Presenting Forensic Evidence to Non-Specialist Audiences

Perhaps the most underappreciated skill in forensic digital storytelling is the ability to translate complex analytical findings into language and forms that resonate with audiences who lack technical backgrounds. Evidence that cannot be understood cannot be acted upon. A brilliantly executed photogrammetric reconstruction of a looted site carries no practical weight if the community that lost that site cannot engage with the findings.

Effective forensic digital storytelling uses narrative structure, visual design, and layered information architecture to ensure that complex evidence is accessible at multiple levels simultaneously. This is a craft that sits at the intersection of data science, journalism, design, and advocacy, and it is increasingly recognised as a distinct professional discipline in its own right.

15. The Future of Forensic Digital Storytelling

Where the Field Is Heading in the Next Decade

The trajectory of forensic digital storytelling points toward three converging developments. First, continued improvement in AI reconstruction capabilities will extend latent space synthesis to increasingly degraded and fragmentary source material, expanding the range of heritage that can be credibly recovered from digital fragments.

Second, the standardisation of digital provenance protocols at the institutional and regulatory level will establish forensic digital storytelling as a recognised form of evidentiary practice in legal and administrative contexts. Several international cultural property frameworks are already moving in this direction, and the pace is accelerating.

Third, the democratisation of the underlying tools will shift capacity from specialist institutions to community-based organisations and individual practitioners. As the barriers to entry fall, the diversity of voices contributing to the forensic reconstruction of cultural heritage will expand, and that expansion will itself be a form of justice.

For further exploration of how cultural heritage intersects with digital practice and identity, visit Culture Mosaic, where the full research archive on forensic methodology, material culture, and heritage data is freely accessible.

FAQ: Auditing the Past

Frequently Asked Questions About Forensic Digital Storytelling

About the Author

Mosaic Culture Labs

Expert in Forensic Digital Storytelling & Heritage Data

Mosaic Culture Labs performs deep-layer audits on the digital creative economy. The team specialises in cutting-edge technology and forensic digital storytelling, ensuring the provenance of cultural heritage remains intact as it moves into the algorithmic age.

“Every methodology applied is documented, reproducible, and subject to independent cultural validation.”