Art vs. Algorithm

A forensic audit of the five mechanisms reshaping authorship, ownership, and creative value in the digital age.

The art world is being restructured from the inside out. Walk into any design studio, scroll through social media, or attend a digital art exhibition, and you will encounter a new kind of creative instrument: artificial intelligence. This is not a forecast. It is happening now, and it is forcing a serious reconsideration of what it means to make something, to own something, and to be paid for creative skill.

Tools like DALL-E, Midjourney, and Stable Diffusion do not merely assist artists: they generate images from text descriptions in seconds. That capability has opened creative expression to people who previously had no way into visual production, and it has simultaneously threatened the livelihoods of professionals who spent years acquiring those skills through training and practice.

To understand what is actually at stake, it helps to stop treating this as a single debate and start treating it as five interlocking ones. Each of the following sections addresses one pillar of the transformation that generative AI has set in motion inside the creative economy.

5 Pillars of AI Transformation

The five mechanisms driving this shift are:

— Latent Space Synthesis — how AI encodes and recombines visual knowledge

— Reverse Diffusion Mechanics — the technical process that turns noise into imagery

— Prompt Engineering — the craft of directing AI outputs through precise language

— Collaborative Augmentation — the hybrid workflow model where human and machine reinforce each other

— Digital Provenance — the forensic chain of authorship, training data consent, and copyright


Pillar 1 — Latent Space Synthesis: How AI Art Actually Works

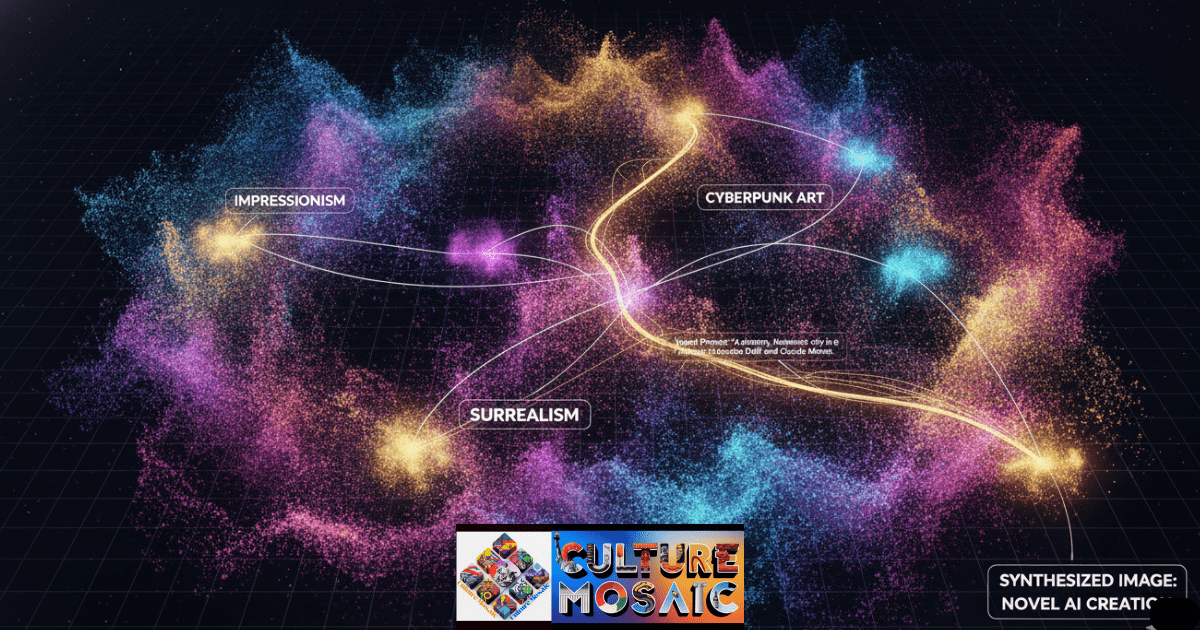

There is a persistent misconception that AI art generators function like a very fast image search engine — that when you type a prompt, the system retrieves the closest match from a database and presents it as original work. This is not what happens, and the distinction matters enormously, both legally and creatively.

What actually occurs takes place inside a high-dimensional mathematical space called a latent space. During training, the model processes hundreds of millions, and in some cases billions, of captioned images and compresses what it learns (not the pixels themselves, but the statistical relationships between visual concepts) into a vast coordinate map. A warm sunset corresponds to a certain vector. Melancholy has a different one. The expressionist tradition occupies a cluster of coordinates that overlaps partially with surrealism but diverges sharply from minimalism.

When a prompt arrives, the model does not look anything up. It encodes the language of the prompt as a position in that coordinate space and uses that position to steer the synthesis of a new image. The result is genuinely novel, in the sense that this exact image has never existed before, but it is built entirely on the statistical residue of work that has. This is the core tension behind the copyright disputes in this space, and it is explored with material precision in The Haptic Resonance of Raw Materials.
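To make that geometry concrete, here is a toy sketch in Python. The four-dimensional vectors and their values are invented for illustration (real models learn embeddings with hundreds or thousands of dimensions), and `embed_prompt` is a hypothetical stand-in for a real text encoder: it simply averages concept vectors. What it does show is the distinction drawn above: a prompt produces a newly computed coordinate, not a pointer to a stored item.

```python
import math

# Invented 4-D "concept vectors", for illustration only. Real models learn
# far larger embeddings from data; nobody hand-writes them like this.
CONCEPTS = {
    "expressionism": [0.9, 0.7, 0.1, 0.2],
    "surrealism":    [0.5, 0.8, 0.5, 0.1],
    "minimalism":    [0.1, 0.0, 0.9, 0.8],
    "warm sunset":   [0.6, 0.2, 0.1, 0.9],
    "melancholy":    [0.8, 0.3, 0.5, 0.0],
}


def cosine(a, b):
    """Cosine similarity: 1.0 means same direction, near 0.0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def embed_prompt(terms):
    """Hypothetical toy encoder: average the vectors of the given terms.
    It computes a new position; it does not retrieve anything stored."""
    vectors = [CONCEPTS[t] for t in terms]
    return [sum(column) / len(vectors) for column in zip(*vectors)]


prompt_vec = embed_prompt(["expressionism", "melancholy", "warm sunset"])
print(prompt_vec in CONCEPTS.values())  # False: a new coordinate
print(cosine(CONCEPTS["expressionism"], CONCEPTS["surrealism"]))  # about 0.87: overlapping
print(cosine(CONCEPTS["expressionism"], CONCEPTS["minimalism"]))  # about 0.24: divergent
```

Cosine similarity is the usual way to compare directions in an embedding space; averaging is the crudest possible way to combine concepts and was chosen only for readability.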

The question courts will eventually have to answer is not whether AI copied an image, but whether building a profitable coordinate map from copyrighted work — without consent or compensation — constitutes a form of taking. That question remains open.

Pillar 2 — Reverse Diffusion Mechanics: From Noise to Signal

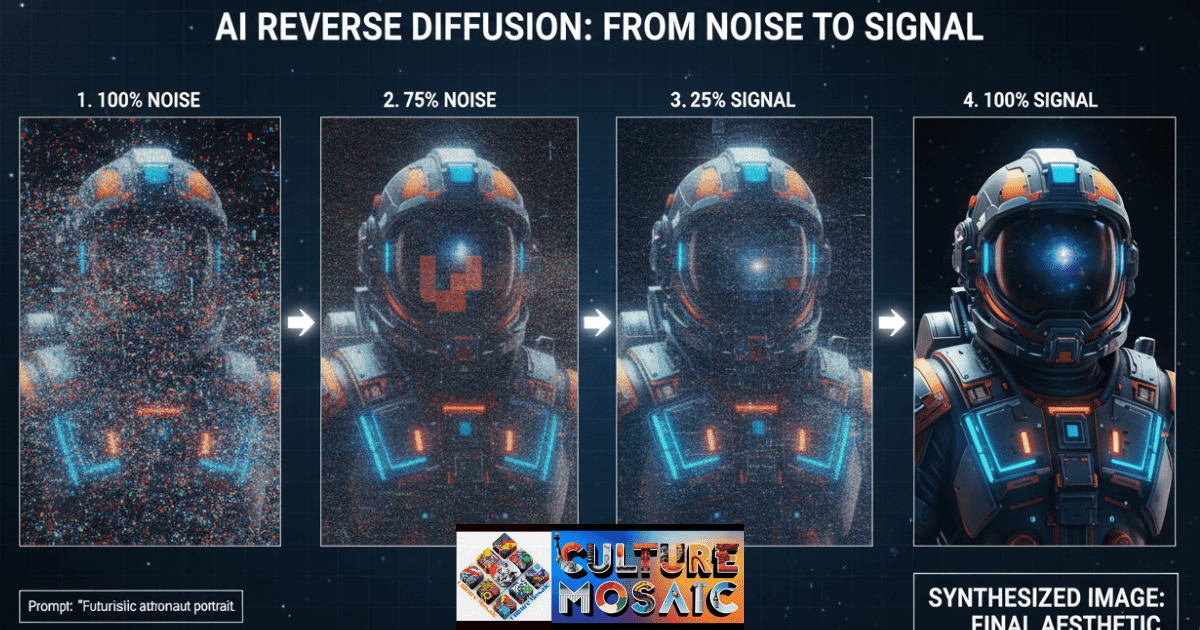

Understanding how diffusion models generate images is not merely a technical curiosity. It reframes the authorship question in ways that matter practically. The dominant AI art tools today (Stable Diffusion, DALL-E 2 and 3, and, by most accounts, Midjourney, whose architecture has not been published) all operate on the same principle: reverse diffusion.

In training, the model is shown images with known amounts of random noise added and learns to predict that noise. Running the process in reverse at inference time is the generative act. Starting from pure random noise (in Stable Diffusion's case, noise in a compressed latent space rather than in pixels), the model runs a sequence of iterative steps, typically tens to hundreds, removing a small, calculated amount of noise at each pass, guided by the prompt. What emerges, gradually, like a photograph developing in a darkroom, is a coherent image that did not exist before the process began.
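The loop described above can be sketched in pure Python. This is a toy DDPM-style (denoising diffusion probabilistic model) sampler built on loud assumptions: the "image" is four numbers, the linear noise schedule is one common textbook choice, and `oracle_noise_predictor` is a hypothetical stand-in for the trained network. A real model predicts the noise from the noisy input, the step number, and the prompt embedding. This oracle cheats by already knowing the target, so the sketch illustrates the mechanics of step-by-step denoising, not how a model learns what to draw.

```python
import math
import random


def linear_schedule(steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances (betas), linearly spaced; needs steps >= 2."""
    betas = [beta_start + (beta_end - beta_start) * i / (steps - 1) for i in range(steps)]
    alphas = [1.0 - b for b in betas]
    alpha_bars, running = [], 1.0
    for a in alphas:
        running *= a
        alpha_bars.append(running)  # share of the original signal left after this step
    return betas, alphas, alpha_bars


def add_noise(x0, t, alpha_bars, rng):
    """Training-time corruption: jump straight to noise level t.
    A model is trained to predict `eps` from the returned noisy values.
    (Shown for completeness; the sampler below does not call it.)"""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    s, n = math.sqrt(alpha_bars[t]), math.sqrt(1.0 - alpha_bars[t])
    return [s * xi + n * ei for xi, ei in zip(x0, eps)], eps


def oracle_noise_predictor(x_t, t, alpha_bars, target):
    """HYPOTHETICAL stand-in for the trained, prompt-conditioned network.
    It cheats by knowing the target, so it returns the exact noise in x_t."""
    s, n = math.sqrt(alpha_bars[t]), math.sqrt(1.0 - alpha_bars[t])
    return [(xi - s * yi) / n for xi, yi in zip(x_t, target)]


def reverse_diffusion(target, steps=1000, seed=0):
    """DDPM-style ancestral sampling: start from pure noise, denoise step by step."""
    rng = random.Random(seed)
    betas, alphas, alpha_bars = linear_schedule(steps)
    x = [rng.gauss(0.0, 1.0) for _ in target]  # maximum noise: no image information
    for t in reversed(range(steps)):
        eps_hat = oracle_noise_predictor(x, t, alpha_bars, target)
        coef = betas[t] / math.sqrt(1.0 - alpha_bars[t])
        # Subtract this step's share of the predicted noise, then rescale.
        x = [(xi - coef * ei) / math.sqrt(alphas[t]) for xi, ei in zip(x, eps_hat)]
        if t > 0:
            # Re-inject a little fresh noise (variance beta_t), except on the last step.
            x = [xi + math.sqrt(betas[t]) * rng.gauss(0.0, 1.0) for xi in x]
    return x


image = [0.2, -0.5, 0.9, 0.0]  # a hypothetical four-value "image"
result = reverse_diffusion(image)
print(max(abs(r - p) for r, p in zip(result, image)) < 1e-6)  # True
```

Production systems differ in important ways: Stable Diffusion runs this loop in a compressed latent space, uses faster samplers with far fewer steps, and strengthens the prompt's influence with techniques such as classifier-free guidance.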

At the start of that process, at maximum noise, there is no image information at all. At the end, the model has made thousands of micro-decisions, each guided by the prompt. The question of who authored those decisions — the person who wrote the prompt, the team that trained the model, the artists whose work shaped the training data — is not rhetorical. It is the question the legal system is being asked to answer in real time.

The visual territory this creates is adjacent to, but categorically distinct from, Digital Glitch Art, which deliberately embraces artefact and error. Diffusion models aim to erase noise entirely. Glitch artists work with it. Both practices foreground process as meaning, but arrive at very different relationships with the viewer.

Pillar 3 — Prompt Engineering: Craft, Curation, or Something Else?

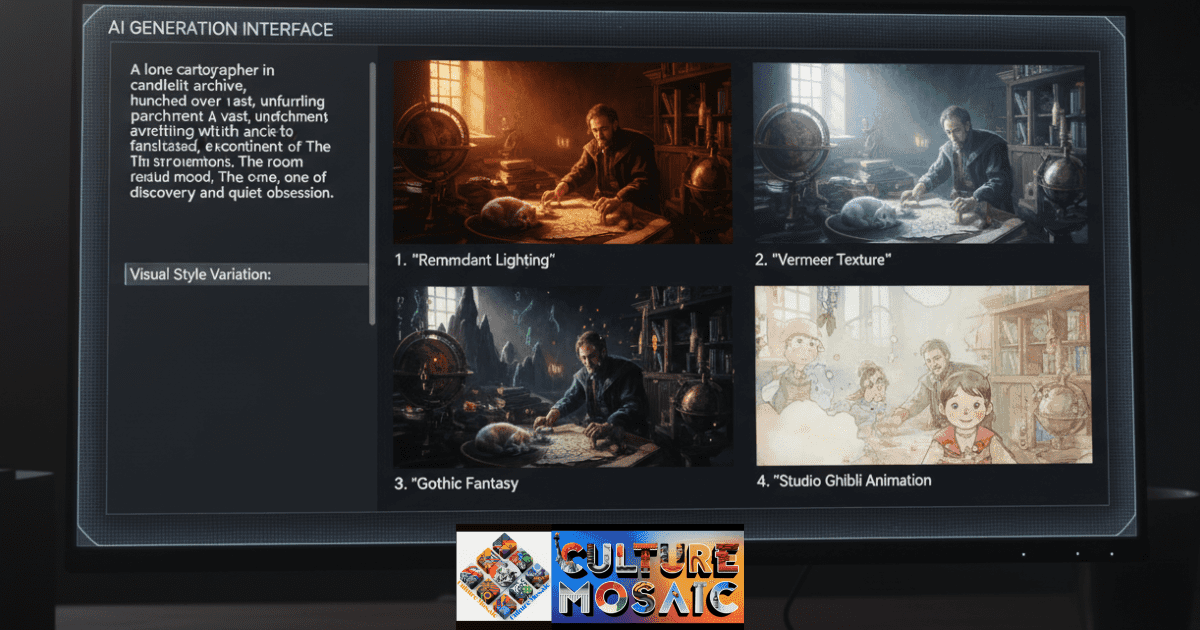

I have spent time in rooms with skilled prompt engineers, and I want to be precise about what I saw. These are not people typing vague descriptions and hoping for the best. They are running controlled experiments. They track which adjectives reliably invoke certain lighting conditions. They know that the phrase ‘volumetric fog’ behaves differently in Midjourney than in Stable Diffusion. They maintain prompt libraries the way a cinematographer maintains a lens kit.

A production-grade prompt for a serious project might look like this: ‘A lone cartographer in a candlelit archive, surrounded by unrolled maps of coastlines that no longer exist — dramatic Rembrandt lighting from upper left, dusty atmospheric haze, muted ochre and sienna palette, painterly texture suggesting Vermeer, medium-format photography depth of field, 8K.’ Every clause is a calculated instruction, not a wish.
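One way to picture a prompt library is as structured data rather than a single string. The sketch below splits the production prompt above into named clauses and generates single-variable variants that share a fixed random seed, the prompt-level equivalent of a controlled experiment. The slot names and seed value are hypothetical, joining clauses with commas is a simplification, and it assumes the image generator lets you fix its seed; the code only builds prompt strings and does not call any real tool.

```python
# The production prompt quoted above, split into named clauses.
# The slot names are hypothetical labels, not a feature of any tool.
PRODUCTION_PROMPT = {
    "subject": "A lone cartographer in a candlelit archive, surrounded by "
               "unrolled maps of coastlines that no longer exist",
    "lighting": "dramatic Rembrandt lighting from upper left",
    "atmosphere": "dusty atmospheric haze",
    "palette": "muted ochre and sienna palette",
    "texture": "painterly texture suggesting Vermeer",
    "optics": "medium-format photography depth of field",
    "resolution": "8K",
}


def build_prompt(clauses: dict[str, str]) -> str:
    """Join clauses, in their stored order, into a single prompt string."""
    return ", ".join(clauses.values())


def single_variable_variants(clauses, slot, options, seed):
    """One run per option. Only `slot` changes and every run shares one seed,
    so a difference in output can be attributed to that single phrase."""
    if slot not in clauses:
        raise KeyError(f"unknown slot: {slot!r}")
    runs = []
    for option in options:
        variant = {**clauses, slot: option}  # copy, then override one clause in place
        runs.append({"seed": seed, "slot": slot, "value": option,
                     "prompt": build_prompt(variant)})
    return runs


for run in single_variable_variants(
        PRODUCTION_PROMPT, "atmosphere", ["dusty atmospheric haze", "volumetric fog"], seed=1234):
    print(run["seed"], run["value"], "->", run["prompt"][:60])
```

Keeping every clause but one constant, and pinning the seed, is what lets a practitioner attribute a change in lighting or mood to a specific phrase, such as ‘volumetric fog’, rather than to chance.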

The US Copyright Office’s current position, that copyright requires human authorship and that a prompt alone generally does not supply enough of it, does not fully resolve this. The operative question is how much human creative control is enough. A prompt that specifies lighting, palette, compositional depth, art historical reference, and technical rendering parameters is making a large number of creative decisions. Whether that clears the threshold for authorship is a genuinely open question, not a settled one.

The deeper issue is one of intentionality and understanding — themes explored closely in The Art of Human Distillation. A human artist who paints a melancholy landscape understands melancholy. The prompt engineer who instructs an AI to render one understands the aesthetics of melancholy well enough to describe it precisely. Whether those two relationships to the subject constitute the same kind of authorship is, at minimum, worth debating seriously rather than dismissing.

Pillar 4 — Collaborative Augmentation: The Hybrid Workflow

Generative AI does not have a single relationship with creative practice. The version that threatens livelihoods (the client who cancels a commission because an AI generated something adequate overnight) is real, and the economic pain it causes is documented and serious. But there is another version that deserves equal attention.

Working illustrators are using AI to generate twenty rough compositional sketches in an hour, then spending the rest of the day developing the one that interests them. Set designers are building reference libraries for productions that previously required months of physical research. Type designers are exploring letterform variations at a scale that would have taken years manually.

In each of these cases, the AI is operating as a high-speed, high-range drafting tool. The creative decisions — which direction to develop, which references carry meaning, what the final work should communicate — remain with the human. This is not a consolation prize or a compromise. It is a legitimate and productive relationship between a practitioner and an instrument.

The practitioners most at risk are those whose value proposition was execution speed and technical consistency. AI excels at both. The practitioners least at risk are those whose value proposition is distinctive vision, client relationship, and cultural judgment. For the creative field as a whole, that distinction may force a clarifying question about what clients have always been paying for — and what they actually want.

Pillar 5 — Digital Provenance: Authorship, Ethics & the Copyright Frontier

Provenance is a concept with deep roots in the art world. For physical works, it means the chain of ownership from studio to collector to museum. For AI-generated work, it needs to mean something different and something more: the chain of creative decisions that brought a piece into existence, and the chain of permissions — or lack thereof — that allowed the training data to be used.

The lawsuits currently working through US courts are not, at their core, about copyright infringement in the conventional sense. The complaints do allege copying during training, but the harm they describe does not turn on any single output reproducing any single image. What was extracted, at enormous scale and without compensation, was the accumulated skill embedded in copyrighted work: the decision-making, the refinement, the years of practice that make an artist’s style recognisable and valuable. Whether the law as currently written can address that is genuinely uncertain. Whether it should address it is considerably less uncertain.

Under current US guidance, purely AI-generated output belongs to nobody, at least in copyright terms. The companies selling access to these tools cannot copyright what those tools produce, and for copyright purposes the output is in the public domain from the moment it is generated. That is a curious and underappreciated fact with practical consequences for anyone building a business around AI-generated imagery.

Move along the spectrum toward substantial human intervention — selecting, arranging, editing, compositing, repainting — and copyright protection becomes available for the human contribution. The AI layer remains unprotected. The human layer can be claimed. If you intend to assert authorship over AI-assisted work commercially, document your creative decisions carefully. That documentation is your provenance.
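What that documentation might look like is sketched below: an append-only log of creative decisions in which each entry stores the SHA-256 hash of the one before it, so that silently editing an earlier entry breaks the chain. This is a hypothetical format, not a legal or industry standard, and the field names and action labels are illustrative. A hash chain shows that the record was not altered after later entries were added; it does not by itself prove when the entries were written.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class Decision:
    actor: str      # "human" or "ai" (illustrative labels)
    action: str     # e.g. "generate", "select", "composite", "repaint"
    note: str       # what was decided, and why
    prev_hash: str  # digest of the previous entry, which links the chain
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def digest(self) -> str:
        """SHA-256 over every field of this entry, serialised deterministically."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


class ProvenanceLog:
    """Append-only record of creative decisions (hypothetical format)."""

    def __init__(self) -> None:
        self.entries: list[Decision] = []

    def record(self, actor: str, action: str, note: str) -> Decision:
        prev = self.entries[-1].digest() if self.entries else GENESIS
        entry = Decision(actor, action, note, prev)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """False if any entry that a later entry points to has been changed.
        (The newest entry has nothing chained after it, so store its digest
        somewhere else if it must be protected too.)"""
        prev = GENESIS
        for entry in self.entries:
            if entry.prev_hash != prev:
                return False
            prev = entry.digest()
        return True

    def human_actions(self) -> list[str]:
        """The human-made decisions, i.e. the layer an authorship claim could point to."""
        return [f"{e.action}: {e.note}" for e in self.entries if e.actor == "human"]


log = ProvenanceLog()
log.record("ai", "generate", "20 compositional drafts from prompt v3, seed 1234")
log.record("human", "select", "chose draft 7 for its diagonal composition")
log.record("human", "repaint", "repainted the figure and hands; new palette")
print(log.verify())  # True
print(log.human_actions())
log.entries[1].note = "changed after the fact"
print(log.verify())  # False
```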

The Question of Creative Authenticity

I want to address a specific claim directly: the idea that AI-generated work cannot be meaningful because the system has no inner life. This argument is intuitive but philosophically imprecise. We do not require the camera to have experienced a landscape in order to find a photograph of it moving. We do not require the printing press to have understood grief in order to be affected by a printed poem. The tool’s inner life has never been the criterion for whether work made with it is valuable.

The more serious version of the authenticity concern is about intentionality and irreducibility. When a human artist makes a work about loss, every decision — the colour chosen, the line left incomplete, the reference withheld — is potentially meaningful, because it was made by someone who has experienced loss and who wanted to communicate something specific about it. The work is, in that sense, irreducible to its formal properties. It carries the trace of a consciousness.

An AI system generating a ‘melancholy’ image has access to the statistical signature of melancholy in the training data. It does not have access to the thing itself. Whether that absence matters depends on what you believe art is fundamentally for. If art is primarily a channel for human consciousness to reach another human consciousness, then the absence is categorical. If art is a structured experience that produces certain effects in viewers, the absence may be irrelevant. Both positions are held by serious people, and neither can be resolved by looking at the image alone.

This is the question at the heart of AI and the Future of Artistic Expressions. What it does, regardless of how it resolves, is force us to articulate with unusual precision what we value when we value art. That process of articulation may prove to be one of the most productive consequences of a genuinely disruptive moment.

Regulatory and Industry Responses

The regulatory landscape is moving, though not quickly enough for the artists most immediately affected. The EU AI Act introduces transparency obligations, including disclosure and labelling of AI-generated content, and its rules for general-purpose AI models begin to address training data consent: providers must publish a summary of the content used for training and put in place a policy to respect rightsholders’ opt-outs from text and data mining. Whether European standards will propagate globally, as GDPR arguably did, remains to be seen, but the precedent is worth watching.

Technology companies have responded with varying degrees of seriousness. Adobe says its Firefly model was trained on licensed and public-domain content, a significant production choice that constrains the model’s range but largely sidesteps the provenance problem. Getty Images has pursued litigation. Smaller platforms have introduced opt-out mechanisms that critics correctly note do nothing for already-trained systems.

Professional guilds representing illustrators, photographers, and visual artists have proposed licensing frameworks modelled on music performance rights: a mechanism by which AI companies contribute to a compensation pool distributed to artists based on style-usage metrics. Whether that is technically implementable or legally viable remains under discussion, but the principle — that artists whose work trained these systems deserve a share of the value created — is gaining genuine traction in policy circles.
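The arithmetic of such a pool is the easy part, and a sketch helps separate it from the hard part. Below, a pool is split in proportion to per-artist usage counts using the largest-remainder method, so payouts are whole cents and sum exactly to the pool. The artist names and counts are hypothetical, and the function assumes a usage metric already exists; defining and measuring ‘style usage’ is precisely the open technical question.

```python
def distribute_pool(pool_cents: int, usage: dict[str, int]) -> dict[str, int]:
    """Split a pool (in whole cents) pro rata to usage counts.

    Uses the largest-remainder method: everyone gets the floor of their exact
    share, then leftover cents go to the largest fractional remainders, so the
    payouts always sum exactly to the pool."""
    total = sum(usage.values())
    if total == 0:
        return {artist: 0 for artist in usage}  # no recorded usage, nothing to split on
    payouts = {a: pool_cents * n // total for a, n in usage.items()}
    leftover = pool_cents - sum(payouts.values())  # always fewer cents than artists
    by_remainder = sorted(usage, key=lambda a: pool_cents * usage[a] % total, reverse=True)
    for artist in by_remainder[:leftover]:
        payouts[artist] += 1
    return payouts


# Hypothetical example: a $1,000 pool and invented usage counts.
print(distribute_pool(100_000, {"artist_a": 5, "artist_b": 3, "artist_c": 1}))
# {'artist_a': 55556, 'artist_b': 33333, 'artist_c': 11111}
```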

Frequently Asked Questions

Is AI-generated art legal to use commercially?

The short answer is yes, with significant caveats. Purely AI-generated images currently lack copyright protection in the United States, meaning no one owns them — including the platform that generated them. You can use them commercially, but you cannot prevent others from using identical outputs. The more significant legal exposure lies upstream: if the model was trained on artists’ copyrighted work without licence, ongoing litigation may affect commercial platforms’ terms of operation. Until case law settles, commercial use carries residual risk, particularly for work that visually references an identifiable artist’s style.

Will AI replace human artists completely?

No — though that answer requires qualification. ‘Replace’ implies a clean substitution, and creative work does not behave that way. What is happening is a revaluation: skills that were rare because they were time-consuming are becoming less commercially valuable because AI performs them adequately. Skills that are rare because they require sustained vision, cultural fluency, and relationship retain value precisely because AI cannot provide them. The transition will be painful for practitioners in the middle tier. It does not portend the end of human creative practice.

Who owns the copyright to AI-generated images?

Under current US guidance: nobody, in the case of purely machine-generated output. The US Copyright Office has been consistent that copyright requires human authorship, and a text prompt does not meet that threshold on its own. If you substantially edit, composite, or repaint an AI-generated base image, the human-authored elements of the resulting work may be copyrightable — but the underlying AI output remains in the public domain. Document your creative decisions carefully if you intend to assert authorship over AI-assisted work.

How can traditional artists compete with AI art generators?

By being legible about what AI cannot do. Build a practice that is visible in its humanity — show process, show iteration, show the specific cultural knowledge and personal history that inflects your decisions. Client relationships built on genuine creative dialogue are not easily replaced by a subscription tool. Artists who have struggled to articulate their value beyond technical execution now have a compelling reason to do so. That articulation is not a retreat. It is a clarification that was overdue.

What ethical concerns should I consider when using AI art tools?

Three primary ones. First, training data provenance: most commercial AI art systems were trained on copyrighted work without consent or compensation, and using them participates in that economy. Second, labour displacement: if your use case directly substitutes for work you would previously have commissioned from a human practitioner, it is worth asking whether the efficiency gain justifies the impact. Third, transparency: presenting AI-assisted work as entirely human-made is a form of misrepresentation that is becoming increasingly detectable and increasingly consequential for professional reputation.

About the Author

Mosaic Creative Labs monitors the intersection of algorithmic synthesis and the creative economy. Our focus is the digital provenance of AI-generated work and the ethical integration of cutting-edge technology in global design studios and cultural institutions. Published on Culture Mosaic.